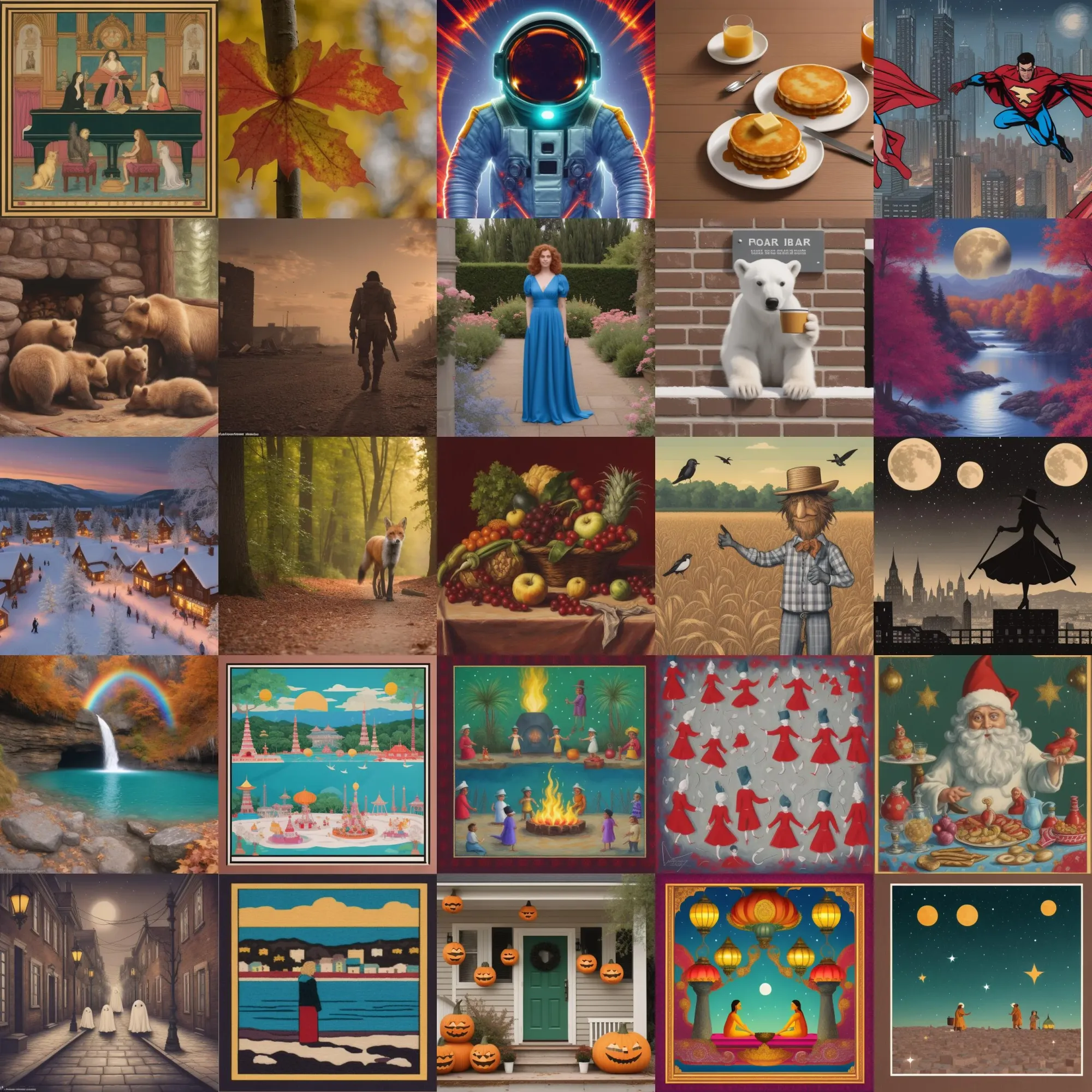

SD-Turbo scores significantly higher on aesthetics; the boost over SD-2.1 is remarkable.

For context: I have an RTX 2060 6GB and I was interested in getting usable sub-10-second generations. I’d made an optimised SD-2.1 pipeline before SDXL came out. The prompts for the images below are taken from Microsoft Image Creator (and were presumably chosen to show off an image model’s breadth of ability).

https://thekitchenscientist.github.io/dalle-3_examples.txt

For text2img you can see how much better SDXL is at grasping the prompt concepts, but for those on older hardware SD-Turbo looks usable for near-real-time painting with a tool like Krita. It combines nicely with Kohya’s DeepShrink and FreeU_V2 to produce 768×1024 images without artefacts in under 5 seconds. If you use the LCM sampler you can also push it past 2 steps without frying the image; it just progressively simplifies the image until you have a very simple vector-style image.

At 4 steps (which is what is used below) it fixes most of the malformed limbs that occur with only 2 steps. Some very interesting stuff happens with complex prompts as you start to push SD-Turbo to 7+ steps with the LCM sampler.

SD-Turbo

SDXL-Turbo

SDXL Base

SDXL-LCM

SSD-1B LCM

SSD-1B

| Method | Seconds per image (RTX 2060 6GB, ComfyUI) |
|---|---|
| SDXL – uni_pc_bh2 | 30 |
| SDXL LCM Lora (it seems I need to use the merge) | 60 |
| SDXL Turbo | 13 |
| SSD-1B | 18 |
| SSD-1B LCM Lora | 10 |
| SD-Turbo | 1.5 |
| SD-2.1 | 3 |

I ranked all 2,135 images I generated with the simulacra aesthetic model. For each prompt I calculated the average aesthetic score across all the methods and then subtracted it from the score of each image in that group. The way SSD-1B scores higher than SDXL makes me think the simulacra aesthetic model, or something similar, was used in the distillation process.

The average score for each prompt was subtracted from the score for each image
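The per-prompt normalisation described above can be sketched in a few lines of Python. This is only an illustration, not the script used for the plots: the function names and the tiny score list are made up, and it assumes the scores are already available as `(prompt, method, score)` tuples.

```python
from collections import defaultdict
from statistics import mean


def center_by_prompt(rows):
    """Subtract each prompt's mean score from every image of that prompt.

    rows: iterable of (prompt, method, score) tuples (hypothetical layout).
    Returns a list of (prompt, method, centred_score) tuples.
    """
    rows = list(rows)  # allow generators; we iterate twice
    scores_by_prompt = defaultdict(list)
    for prompt, _method, score in rows:
        scores_by_prompt[prompt].append(score)
    prompt_mean = {p: mean(s) for p, s in scores_by_prompt.items()}
    return [(p, m, s - prompt_mean[p]) for p, m, s in rows]


def mean_by_method(centred_rows):
    """Average the centred scores per method, so methods can be compared."""
    scores_by_method = defaultdict(list)
    for _prompt, method, score in centred_rows:
        scores_by_method[method].append(score)
    return {m: mean(s) for m, s in scores_by_method.items()}


# Made-up scores purely to show the shape of the data.
example = [
    ("a cat", "SD-Turbo", 6.0),
    ("a cat", "SD-2.1", 4.0),
    ("a boat", "SD-Turbo", 5.0),
    ("a boat", "SD-2.1", 5.0),
]
centred = center_by_prompt(example)
per_method = mean_by_method(centred)
```

Centring per prompt removes the effect of some prompts simply being "prettier" than others, so what is left is how far each method sits above or below the average for the same prompt.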

I used seed 1000000007, the lcm sampler and the sgm_uniform scheduler. The Turbo models used 4 steps and the LCM models 6 steps. The base images were generated with uni_pc_bh2 and 12 steps. The other two groups of prompts are available here:

https://thekitchenscientist.github.io/dalle-2_examples.txt

https://thekitchenscientist.github.io/artist-space_examples.txt

The artist-space examples are 244 prompts based on: https://docs.google.com/spreadsheets/d/14xTqtuV3BuKDNhLotB_d1aFlBGnDJOY0BRXJ8-86GpA/edit#gid=0

I ran 10k samples from this list using SSD-1B and then analysed the image composition and colours to pick a spread-out, diverse, representative set of artist prompts from the infinity of latent space.

Bonus plot showing the spread of scores across each group:

I use SD-Turbo for ideation on people and landscapes; hybrid sampling for furniture, sculpture and architecture; and prompt delay for keeping the same composition across multiple styles. The main thing is to use only a single seed. If I want control, I use IP-Adapter, img2img or ControlNet. Some seeds are biased towards splitting the subject and similar problems, so once I found a reliable seed I stuck with it; I’ve been using it for a year now.

There are a couple more tricks I’ve not mentioned here, not all of which are available in ComfyUI yet, that help the weaker models resolve better:

One that you can try now is to use a slow sampler for the first 15% of steps, then switch to a fast method like LCM for the rest. I’ve found that for architecture, furniture and sculpture in SSD-1B this gives much better results in only 10 steps (4/14slow+6/6LCM).
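To make the step notation concrete, here is a small Python sketch that parses a plan such as `4/14slow+6/6LCM` and counts the steps that are actually run. It rests on an assumption about the notation: `4/14slow` is read as "run the first 4 steps of a 14-step slow-sampler schedule" and `6/6LCM` as "run all 6 steps of a 6-step LCM schedule". The `Stage` class and `parse_plan` function are hypothetical helpers, not part of ComfyUI or any other library.

```python
import re
from dataclasses import dataclass


@dataclass
class Stage:
    sampler: str         # label of the sampler used in this stage
    run_steps: int       # steps actually executed in this stage
    schedule_steps: int  # length of the schedule the stage is taken from


def parse_plan(plan: str) -> list[Stage]:
    """Parse 'run/total<sampler>' stages joined by '+' (assumed notation)."""
    stages = []
    for part in plan.replace(" ", "").split("+"):
        match = re.fullmatch(r"(\d+)/(\d+)([A-Za-z_]\w*)", part)
        if match is None:
            raise ValueError(f"cannot parse stage: {part!r}")
        run, total, sampler = int(match[1]), int(match[2]), match[3]
        if run > total:
            raise ValueError(f"stage {part!r} runs more steps than its schedule")
        stages.append(Stage(sampler=sampler, run_steps=run, schedule_steps=total))
    return stages


stages = parse_plan("4/14slow+6/6LCM")
total_steps = sum(stage.run_steps for stage in stages)  # 4 + 6 = 10
```

Under this reading the plan executes 10 sampler steps in total, which matches the "only 10 steps" figure above; how the two stages are wired together in a given UI is left open here.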

Originally posted on Reddit @thkitchenscientist